Test Analysis & Insights

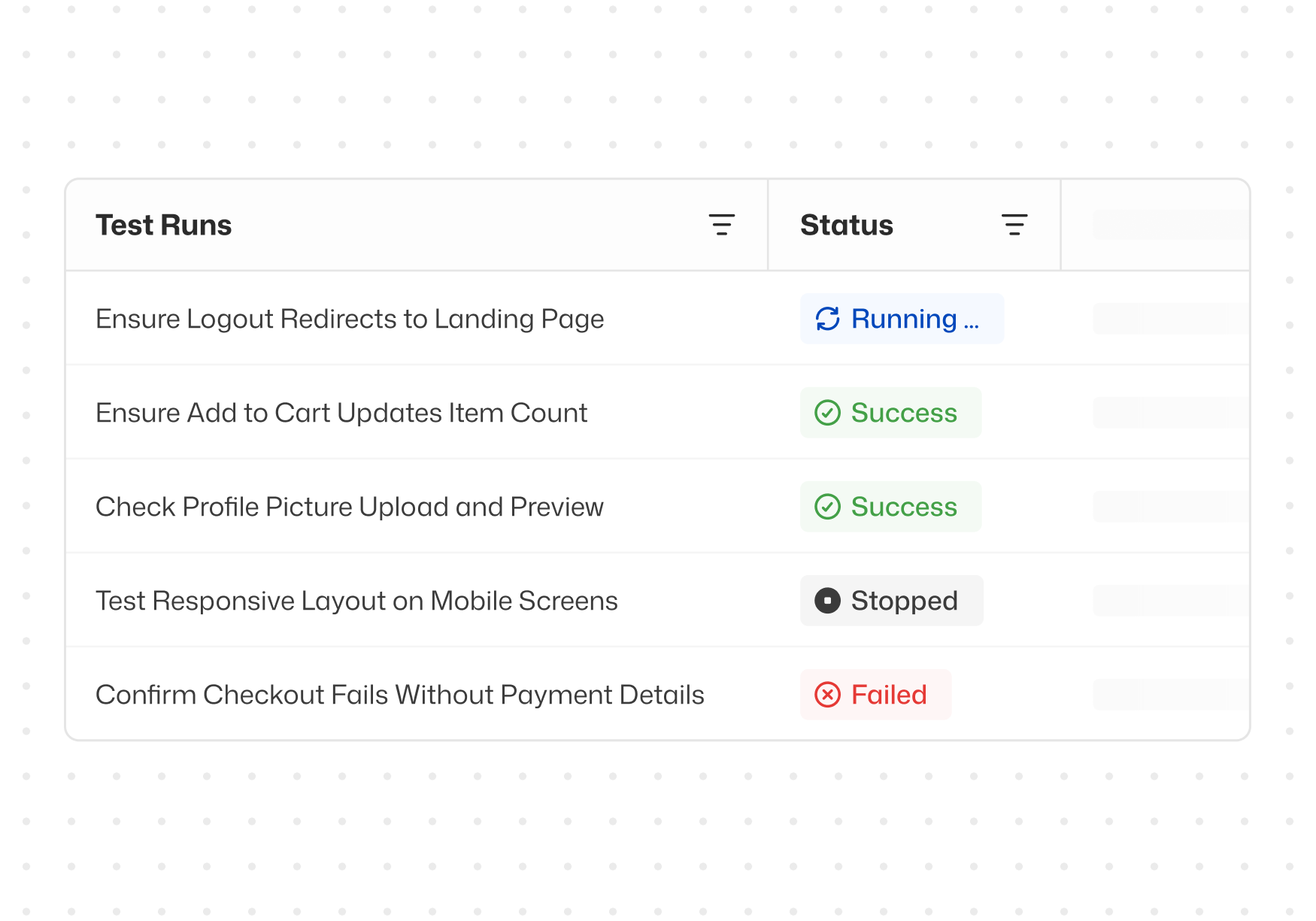

Instant failure analysis. Reporting you can share. Thunders turns every test run into actionable insight so your team can make confident release decisions, communicate quality across the org, and improve with every run.

Why choose Thunders for Test Analysis & Insights

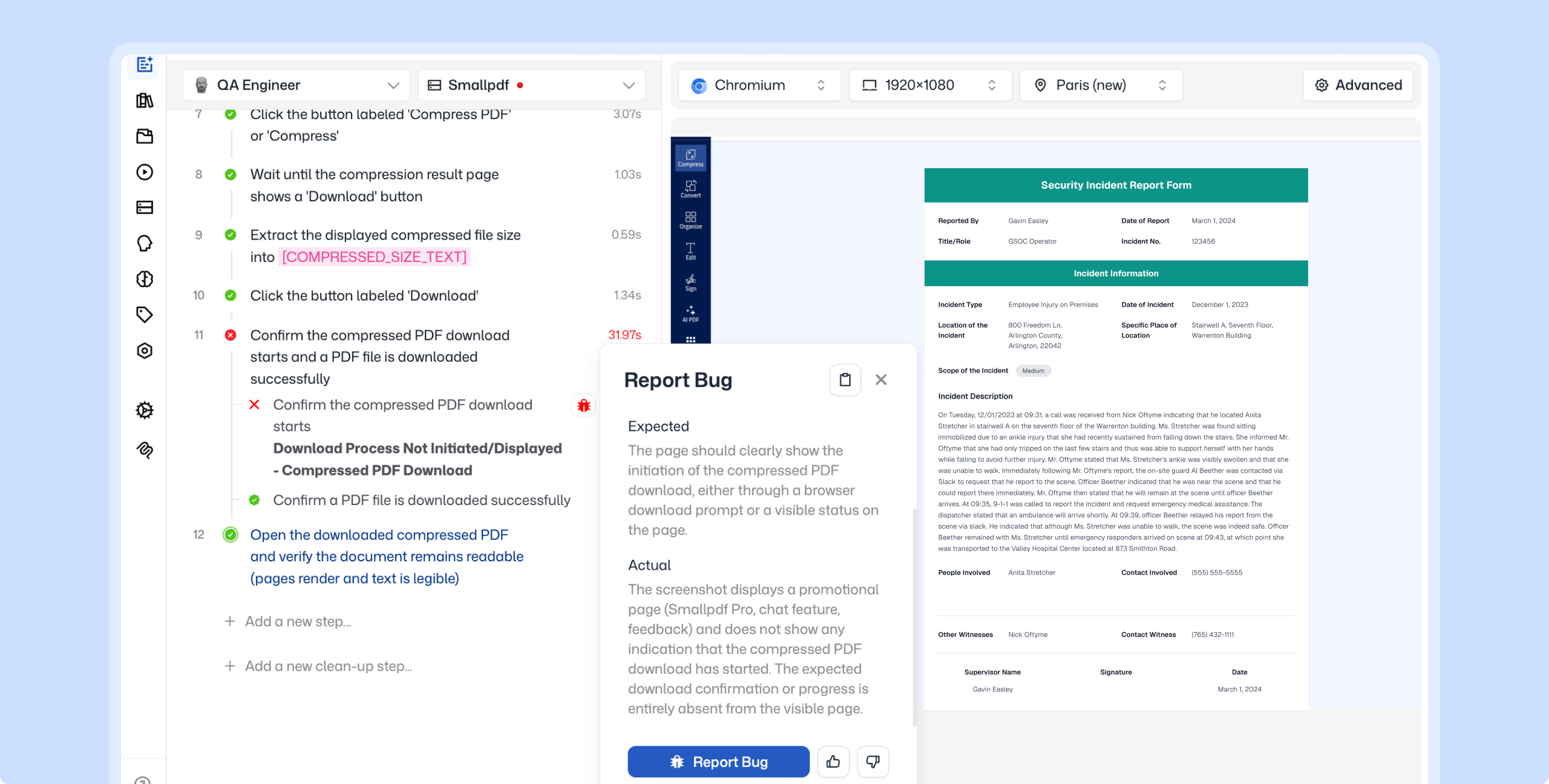

Go from test failure to root cause in seconds

When a test step fails, the AI persona that executed it explains exactly what happened, what was expected, and where the mismatch occurred.

Full visibility into what the AI persona did at every step

Every action the AI persona takes is traced and explained. You see how each step was interpreted, what decisions were made, and why. Complete transparency into how your tests run.

One-click bug reports with full failure context

Turn any failed step into a bug report in Jira, Linear, or Azure DevOps with one click. The report arrives pre-filled with screenshots, expected vs. actual results, and the full execution path.

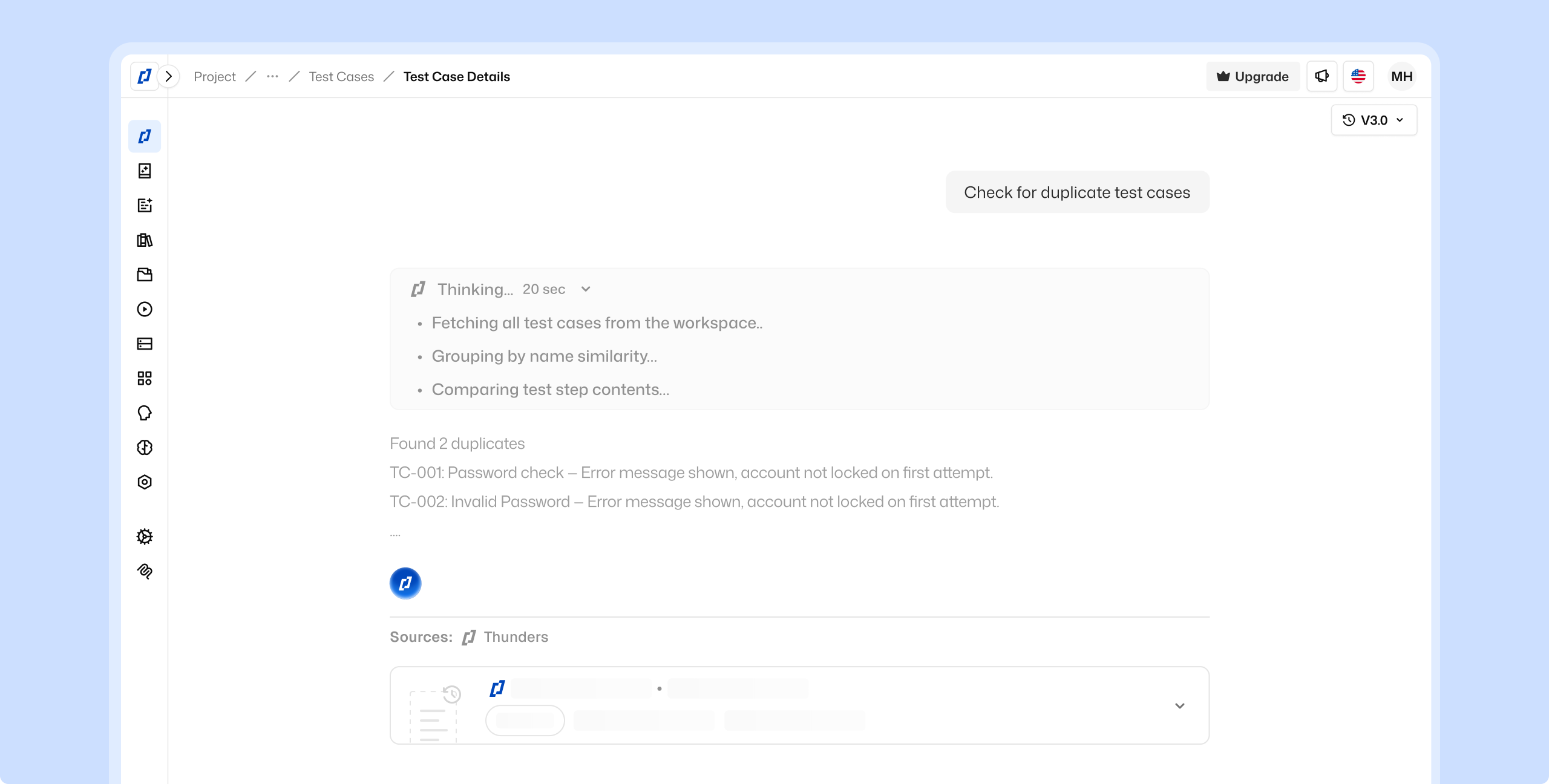

Get a second pair of eyes on every test with Thunders Copilot

Thunders Copilot reviews your test descriptions like a senior QA would. It helps sharpen intent so your tests produce more accurate results over time.

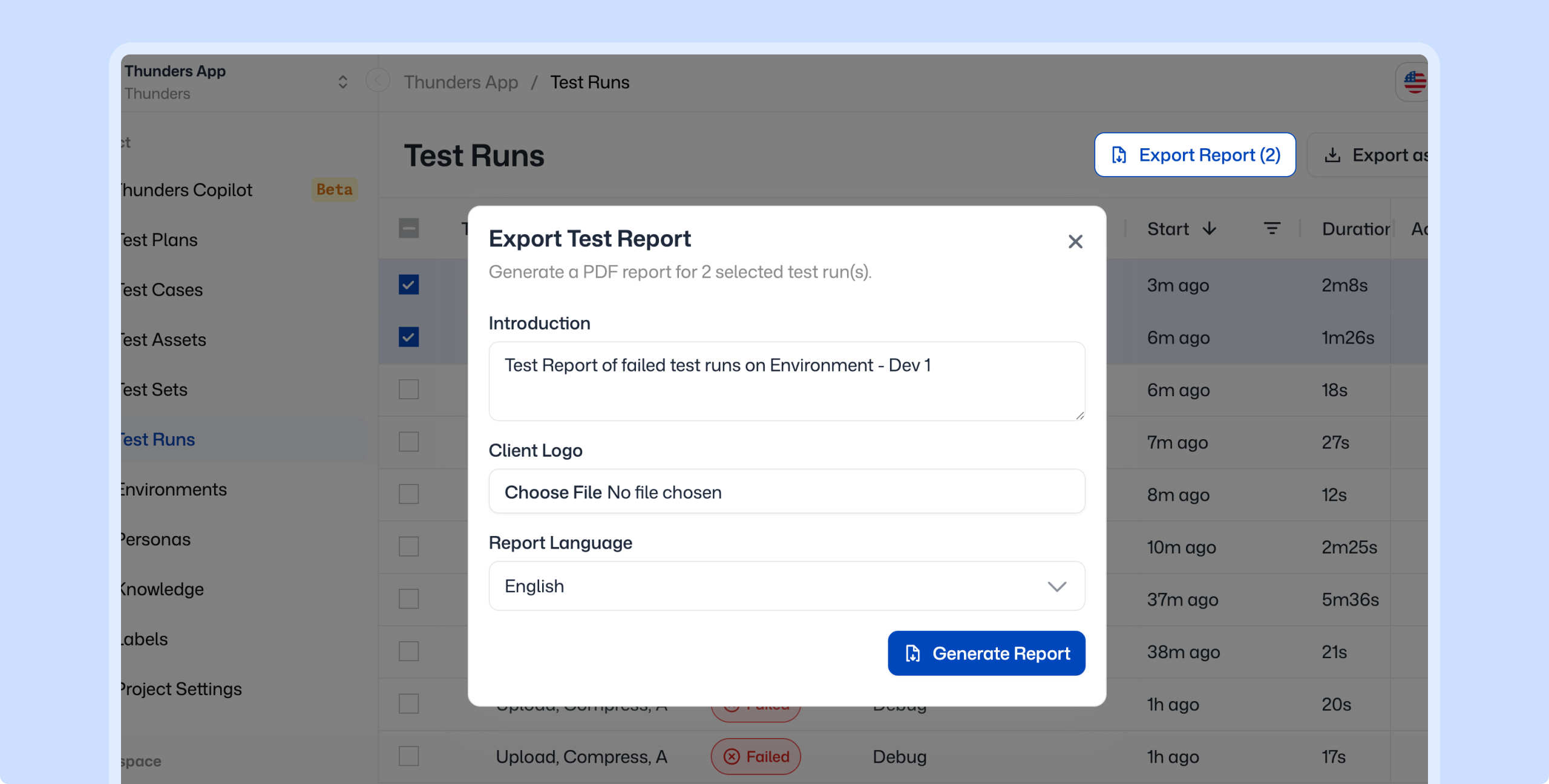

Export execution results as shareable reports

Export test results with step outcomes, screenshots, and a pass/fail summary. Share them with product, engineering, or leadership.

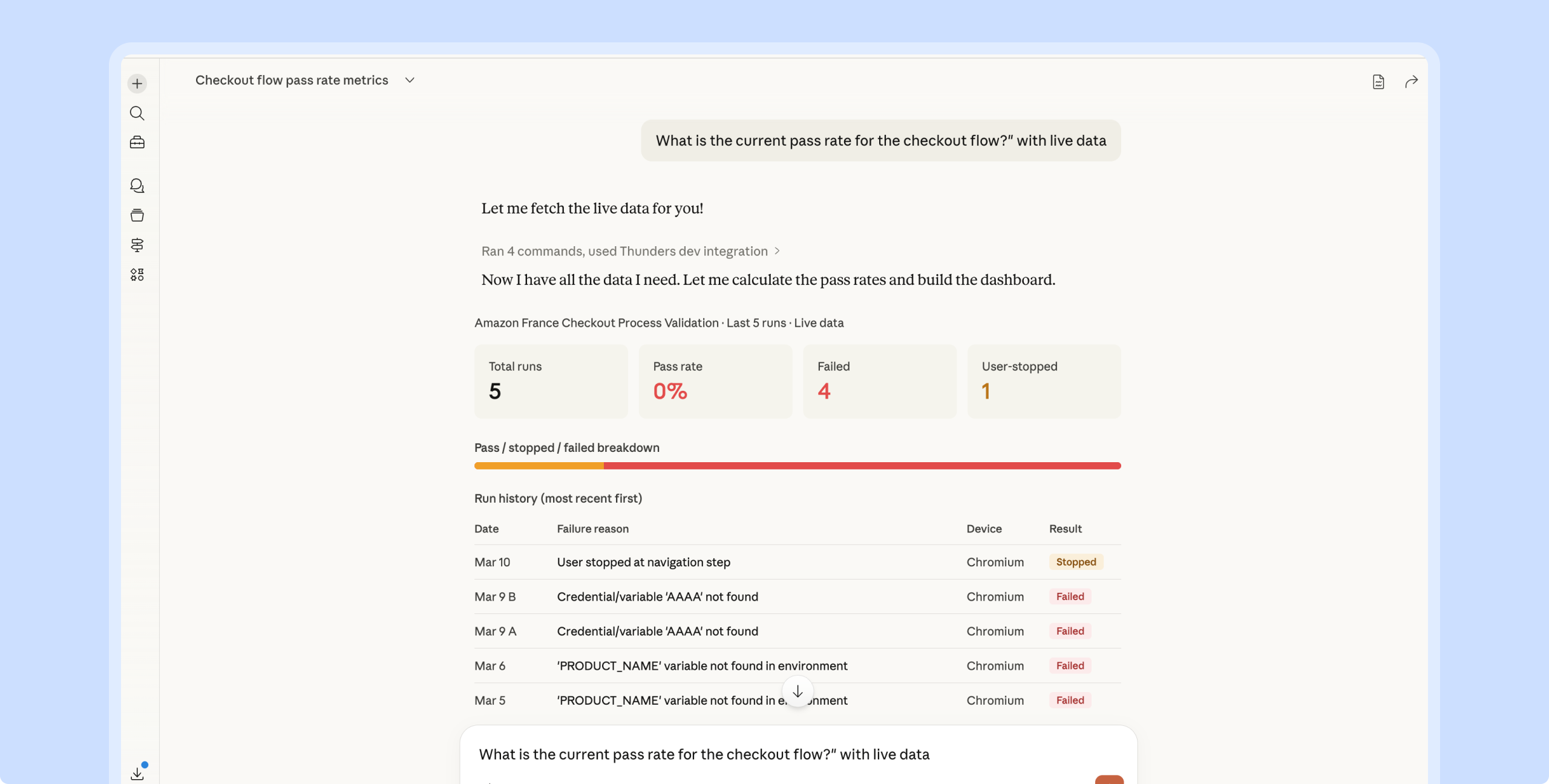

Build custom dashboards and analytics through Thunders MCP

Connect any MCP-compatible AI assistant to your Thunders account. Build interactive dashboards from test data, or ask a question and get an answer in seconds.

Test Analysis & Insights Use Cases

A test in the regression suite failed. The AI persona that executed it shows which step failed, what it expected, and why. One click turns it into a bug report in Jira or Linear, ready to triage.

Product and engineering need to know where quality stands before the release. Export execution results as a shareable report with step outcomes, screenshots, and a pass/fail summary. It is ready to share, with no manual assembly.

Leadership asks if you are ready to ship. Product wants to know if checkout is covered. Connect Thunders MCP to any AI assistant and answer from live test data. Build a dashboard if you need one, or get the answer in seconds.

Thunders Copilot reviews your tests like a senior QA would. It catches vague scenarios, suggests edge cases, and spots duplicates. Over time, your suite gets sharper and your tests produce more accurate results.

Frequently Asked Questions

How quickly can I understand why a test failed?

Immediately. When a step fails, the AI persona that executed it explains what happened, what was expected, and where the mismatch occurred. You see this inline for every step. No log parsing needed.

Can I share test results with non-technical stakeholders?

Yes. Export execution results as reports with step outcomes, screenshots, and a pass/fail summary. Stakeholders can review quality status without accessing the platform or interpreting raw test data.

How do I get visibility into overall test quality?

Connect Thunders MCP to any MCP-compatible AI assistant (for example Claude, ChatGPT, or Cursor). From there, you can query your test data in natural language, build interactive dashboards, track pass/fail trends, analyze coverage, and run any custom analytics you need, all from live data.

How does Copilot improve my tests?

Thunders Copilot reviews your test descriptions and suggests improvements for clearer intent, better prompts, and more complete validations. It also identifies edge cases and gaps in your scenarios, helping your suite get stronger over time.