Test Management & Collaboration

Structure your tests for any release, regression, or smoke run. Thunders handles execution, results, and bug reporting so your team works from one source of truth. Thunders Copilot helps you spot duplicates, restructure, and keep your suite clean as it grows.

Why choose Thunders for Test Management & Collaboration

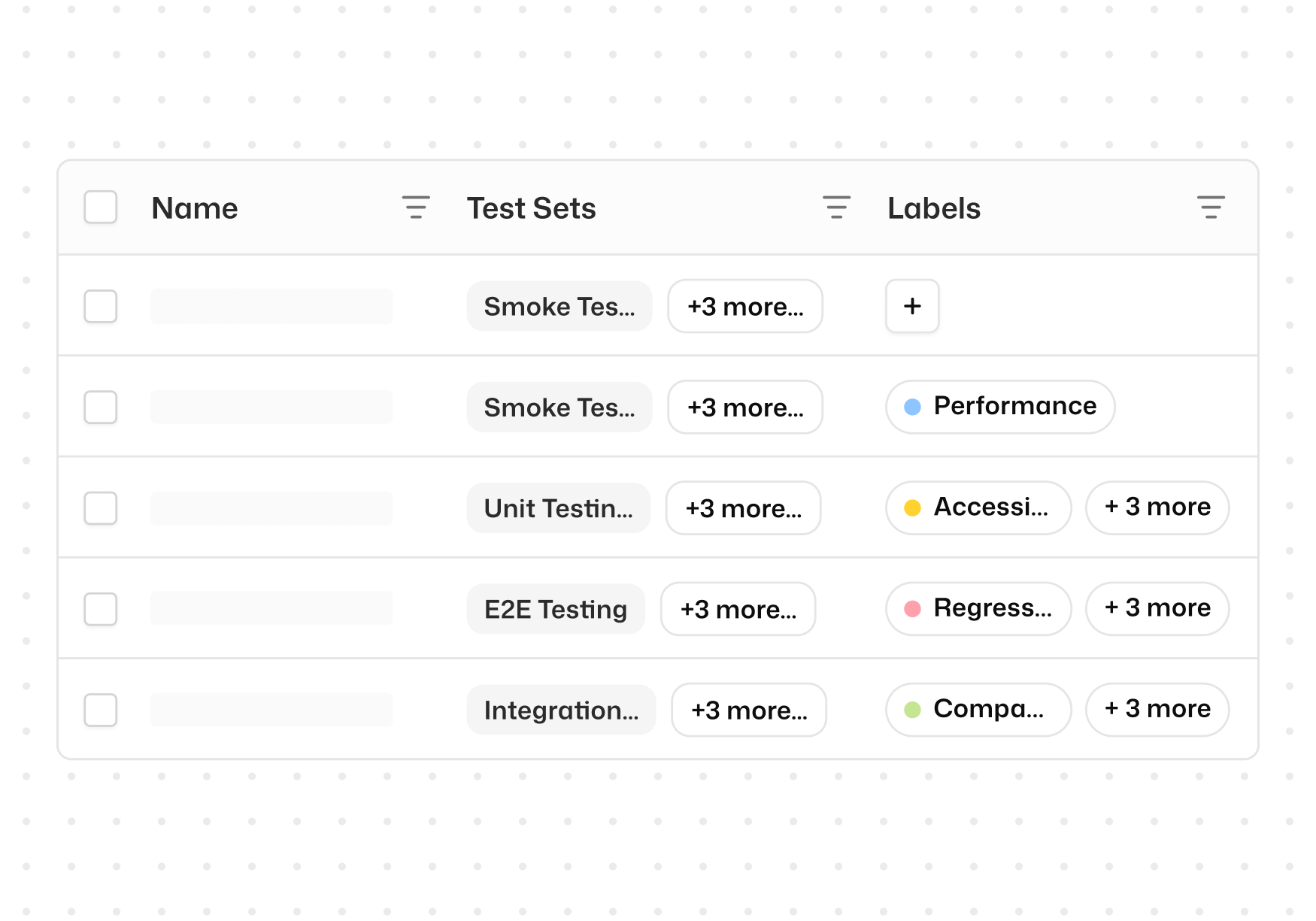

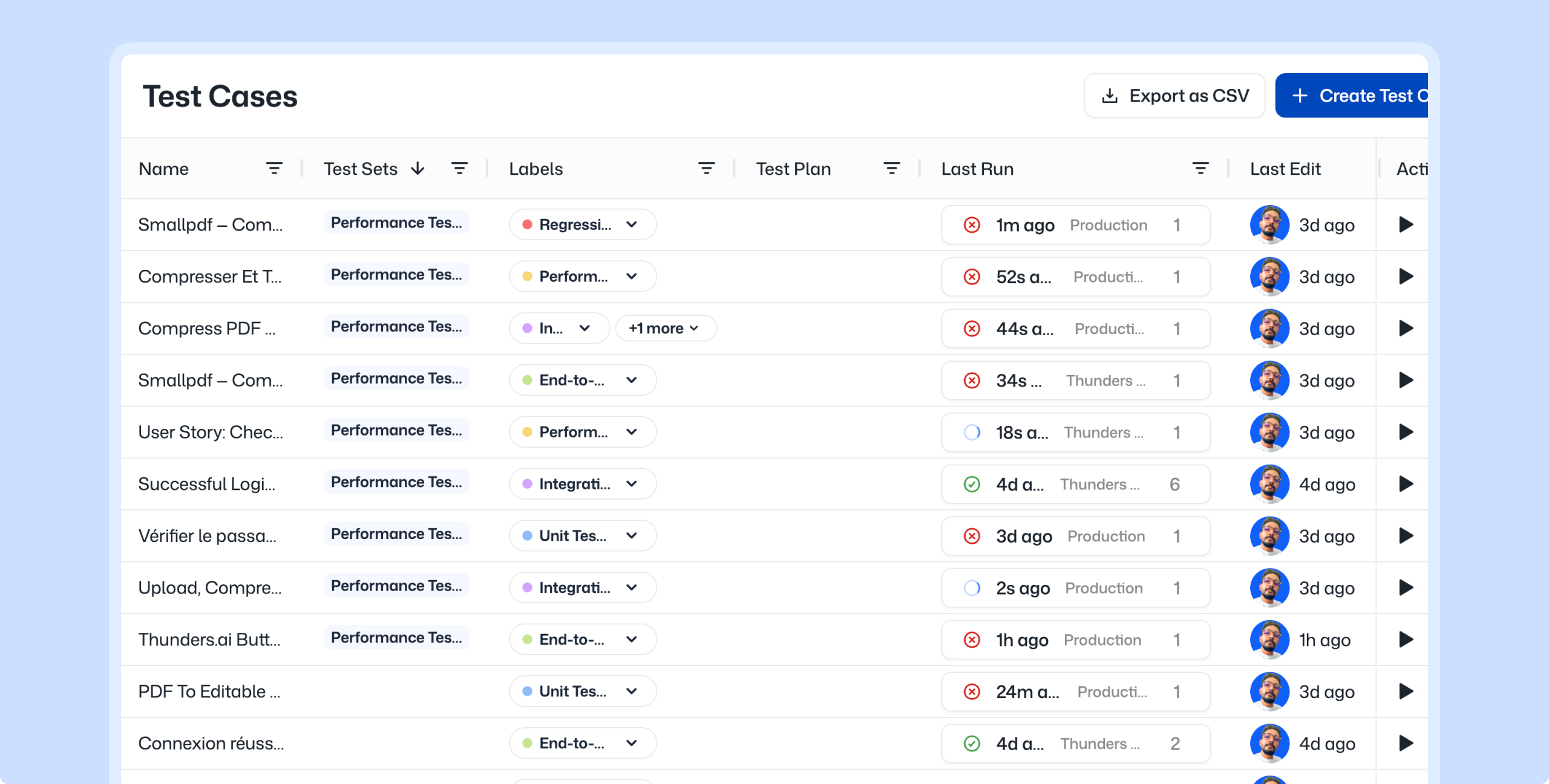

Organize test cases with labels for fast filtering and execution

Tag test cases by feature, priority, sprint, or any category you define. Filter by label to find what you need instantly and execute only the tests that matter for the task at hand.

Group tests into test sets for releases, regression, and smoke runs

Group test cases into Test Sets, set concurrency limits, and run them on demand or automatically.
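To make the idea of a concurrency limit concrete, here is a minimal Python sketch. It is not Thunders code or the Thunders API: `run_test_set`, `run_one`, and `fake_run` are hypothetical names, and the sketch only assumes that a limit means "never run more than N tests from the set at the same time".

```python
import asyncio


async def run_test_set(test_names, concurrency_limit, run_one):
    """Run every test in the set, with at most `concurrency_limit` in flight.

    `run_one` is a hypothetical coroutine that executes one test and
    returns its status; it stands in for whatever actually runs a test.
    """
    semaphore = asyncio.Semaphore(concurrency_limit)

    async def guarded(name):
        # Wait for a free slot before starting the test.
        async with semaphore:
            return name, await run_one(name)

    pairs = await asyncio.gather(*(guarded(name) for name in test_names))
    return dict(pairs)


async def fake_run(name):
    # Placeholder for a real test execution.
    await asyncio.sleep(0.01)
    return "passed"


if __name__ == "__main__":
    statuses = asyncio.run(
        run_test_set(["login", "checkout", "search"], 2, fake_run)
    )
    print(statuses)
```

A lower limit is gentler on a shared staging environment; a higher limit finishes the set sooner.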

Execute test sets across browsers, environments, and AI Personas

Run any Test Set across multiple browsers, environments, and AI Personas. Validate functional correctness, accessibility, and security from the same set of tests.

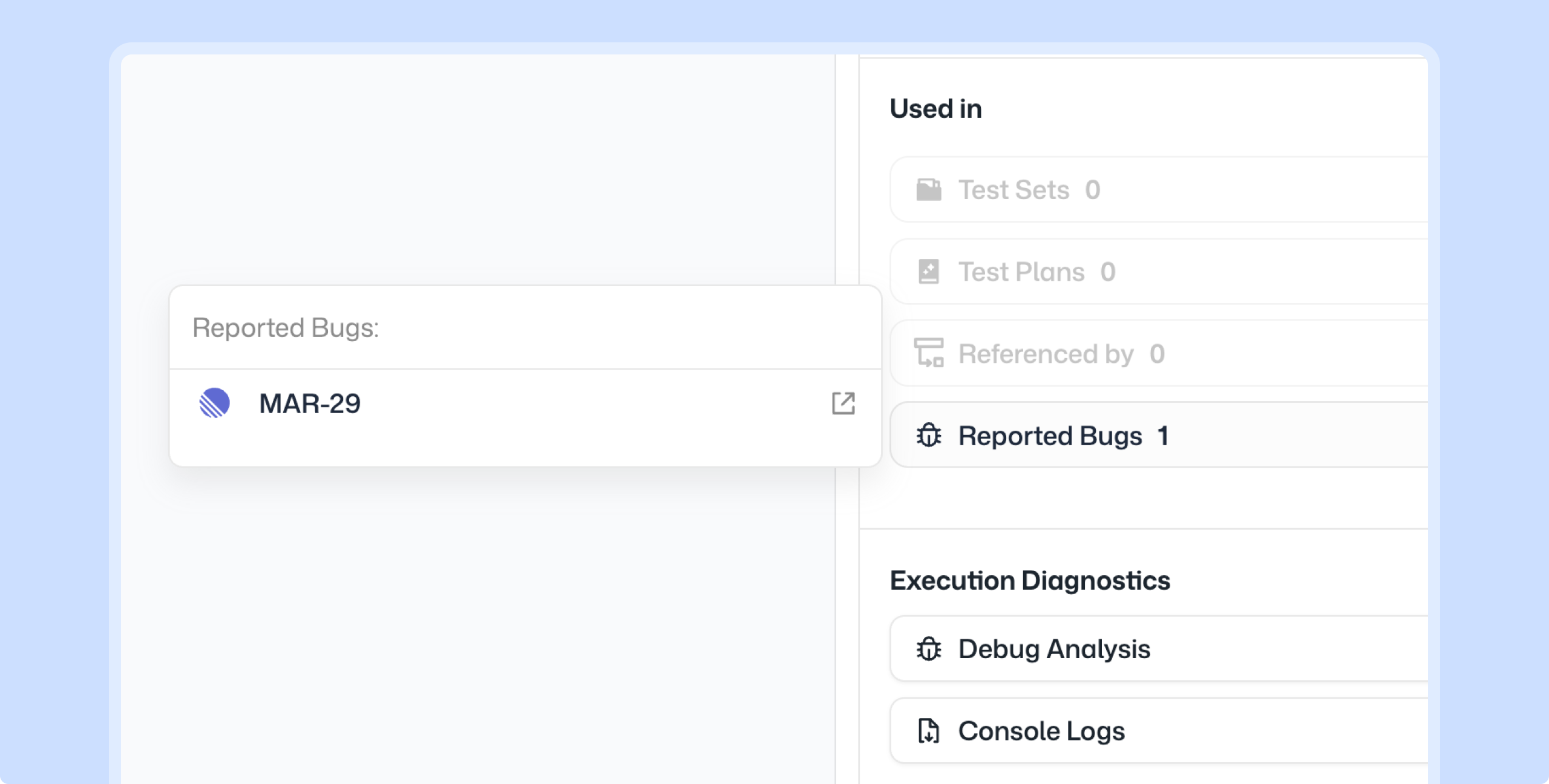

Report bugs to Jira, Linear, or Azure DevOps in one click

Every failed step includes a one-click bug report option. The report lands in your issue tracker ready to triage.

Refine and reorganize your test suite with Thunders Copilot

Thunders Copilot analyzes your existing test cases, spots duplicates, suggests a cleaner label taxonomy, and identifies flows that can be consolidated. Your suite stays sharp as it grows.

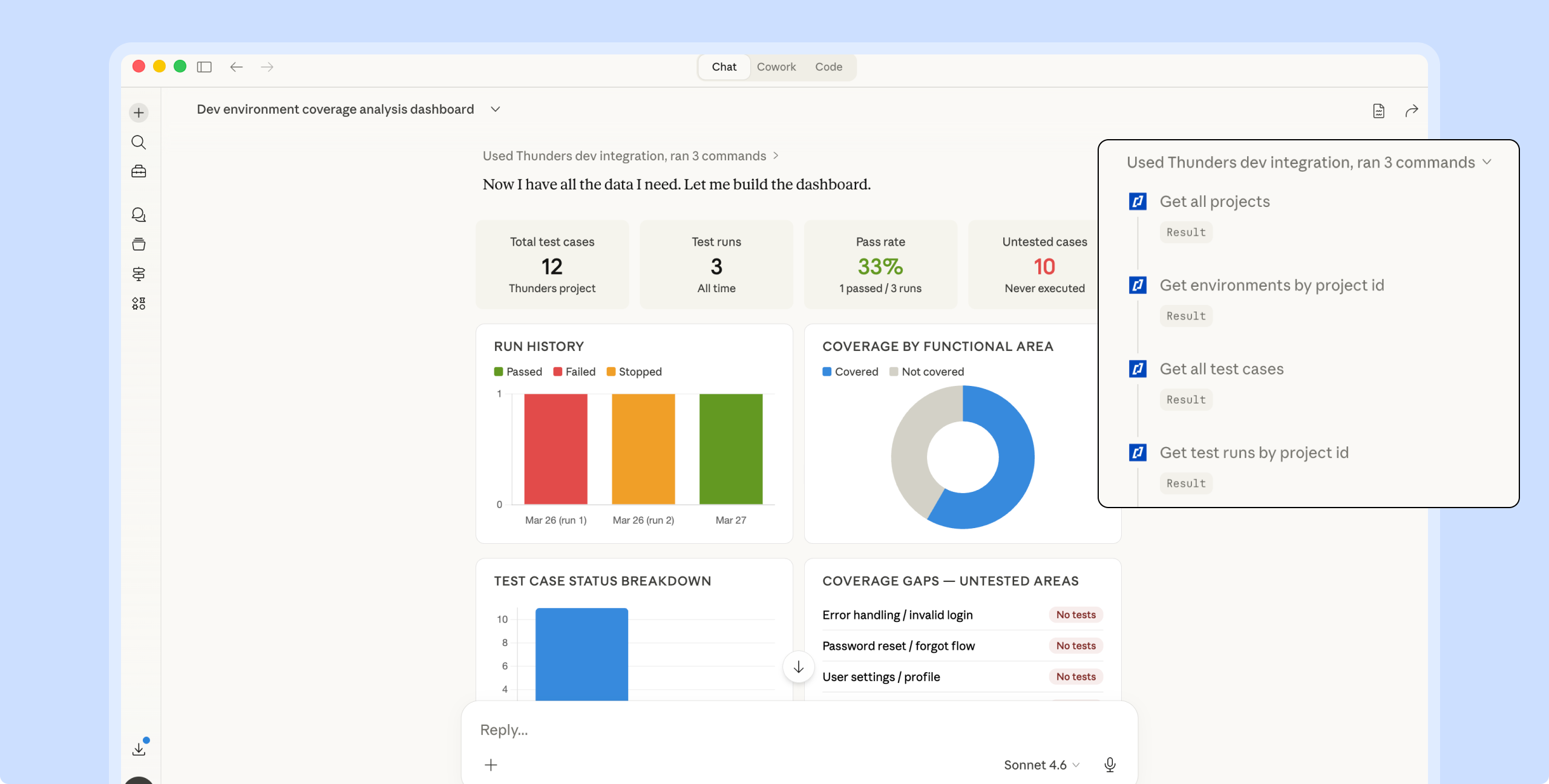

Analyze coverage and testing status through Thunders MCP

Connect any MCP client (Claude, ChatGPT, Cursor) to Thunders and query your testing data in natural language. Answers come from live data, not static reports.

Test Management & Collaboration Use Cases

A growing test suite without structure becomes noise. Labels let you tag test cases by feature, priority, or sprint, then filter and execute just the ones you need. Test Sets group those labeled cases for a specific purpose: a release candidate, a nightly regression, a smoke check. When the suite gets messy, Thunders Copilot analyzes what you have, spots duplicates, and suggests how to restructure. You build the organization once. Every run after that follows it.

A test fails. The expected behavior did not match. Instead of copying screenshots and writing reproduction steps, one click sends a complete bug report to your issue tracker. The report includes the failed step, expected vs. actual outcome, screenshots, and the full execution path. It lands in Jira, Linear, or Azure DevOps ready to be triaged.

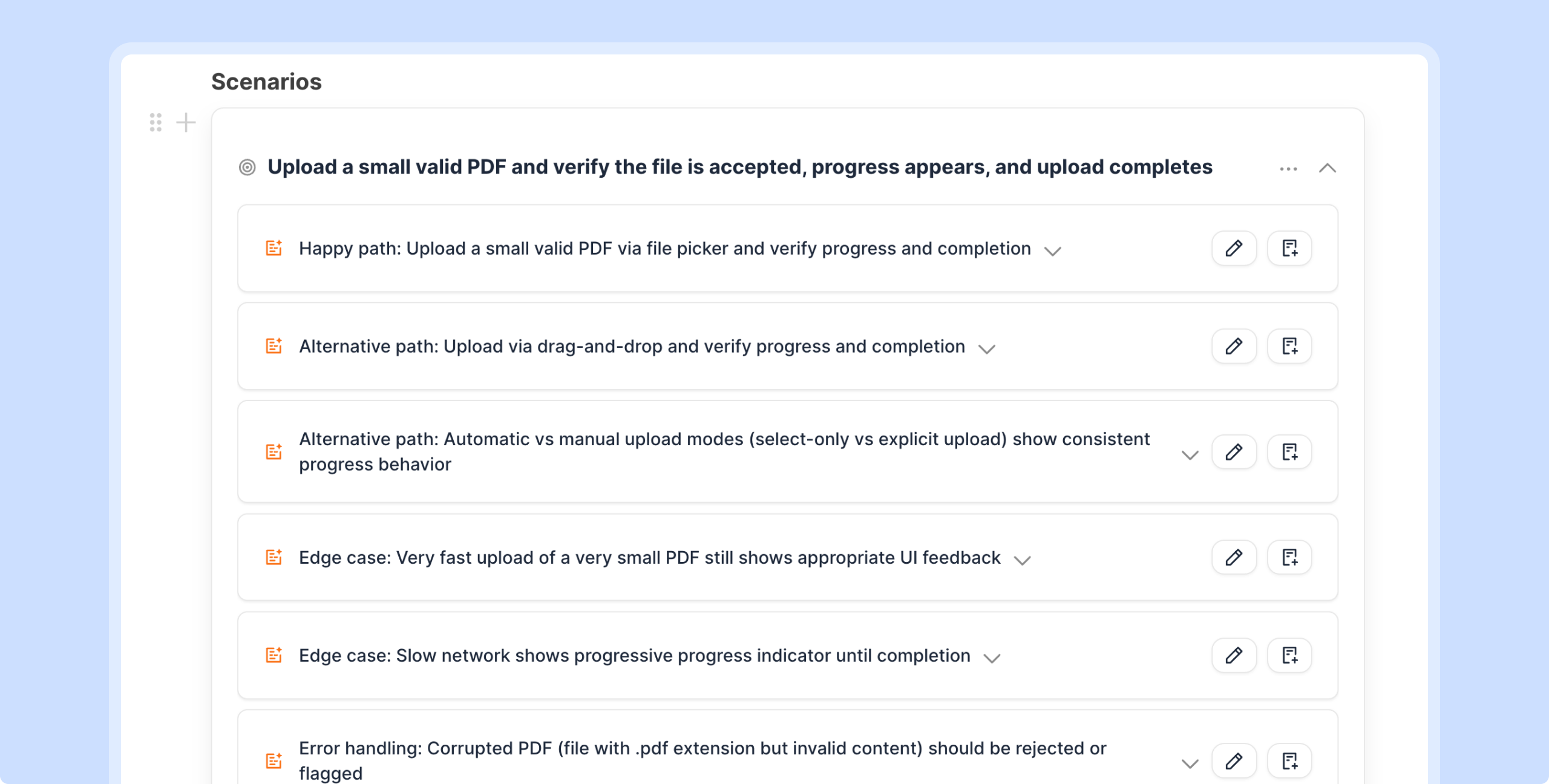

Product teams write specs. QA teams write tests. The translation between the two usually happens in someone's head. Thunders closes that gap. Paste a spec, a PRD, or any product context. Thunders generates a structured test plan with scenarios and edge cases. Edit it collaboratively with AI. Generate runnable test cases directly from the plan. The spec becomes the source of truth for testing.

Product managers need to know what is tested. Engineering leads need pass rates and failure trends. Instead of building dashboards manually, connect any MCP client (Claude, ChatGPT, Cursor) to Thunders MCP. Ask questions in natural language: what features are untested, which test sets are failing, where the gaps are. The answer comes from live data, not a static report.

Now it's the PMs who write test plans in plain language directly in Thunders.

Frequently Asked Questions

How do labels and test sets work together?

Labels tag individual test cases by feature, priority, sprint, or any category you define. Test Sets group test cases for execution: regression suites, release candidates, smoke tests. Filter by label when building a test set, so the two work together. Label for organization. Group into sets for execution.
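The relationship between the two can be pictured with a small Python sketch. This is an illustrative data model only, not how Thunders stores anything: `LabeledCase`, `ExecutionSet`, and `filter_by_labels` are hypothetical names, and the example label values are made up.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class LabeledCase:
    """A test case carrying free-form labels (hypothetical model)."""
    name: str
    labels: frozenset


@dataclass
class ExecutionSet:
    """A named group of cases that are run together (hypothetical model)."""
    name: str
    cases: list = field(default_factory=list)


def filter_by_labels(cases, required):
    """Return the cases that carry every label in `required`."""
    required = set(required)
    return [case for case in cases if required <= case.labels]


suite = [
    LabeledCase("guest checkout", frozenset({"checkout", "p0"})),
    LabeledCase("apply coupon", frozenset({"checkout", "p2"})),
    LabeledCase("password reset", frozenset({"auth", "p0"})),
]

# Labels organize the suite; the set decides what actually runs.
smoke = ExecutionSet("smoke", filter_by_labels(suite, {"p0"}))
print([case.name for case in smoke.cases])
```

The same case can belong to several sets, since a set only references cases and the labels stay on the case itself.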

Can I generate a test plan from a product spec?

Yes. Paste a spec, PRD, or any product context into the Test Plan editor. Thunders generates a structured plan with scenarios and edge cases. Edit it collaboratively with AI, then generate runnable test cases directly from the plan.

How does one-click bug reporting work?

Every failed test step includes a bug report option. The report is pre-filled with the failure context: expected vs. actual result, screenshots, and the execution path. It connects directly to Jira, Linear, or Azure DevOps. No manual reproduction steps needed.
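As a rough illustration of what "pre-filled" could mean, the Python sketch below assembles a report from a list of step results. Everything in it is an assumption made for the example: `StepResult`, `build_bug_report`, and the field names are hypothetical, and the actual payloads Thunders sends to Jira, Linear, or Azure DevOps are not described here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class StepResult:
    """One executed step (hypothetical shape)."""
    description: str
    expected: str
    actual: str
    screenshot: Optional[str] = None

    @property
    def failed(self):
        return self.expected != self.actual


def build_bug_report(test_name, steps):
    """Build a report for the first failed step, or return None if all passed."""
    for index, step in enumerate(steps):
        if step.failed:
            # The execution path is every step up to and including the failure.
            path = steps[: index + 1]
            return {
                "title": f"{test_name}: step {index + 1} failed ({step.description})",
                "expected": step.expected,
                "actual": step.actual,
                "screenshots": [s.screenshot for s in path if s.screenshot],
                "execution_path": [s.description for s in path],
            }
    return None


steps = [
    StepResult("open cart", "cart page", "cart page", "cart.png"),
    StepResult("click pay", "payment form", "error banner", "error.png"),
    StepResult("confirm order", "receipt", "receipt"),
]
report = build_bug_report("checkout flow", steps)
print(report["title"])
```

Because the path stops at the failing step, whoever triages the report sees exactly what ran before the failure and nothing after it.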

Can I get coverage and testing status insights without a built-in dashboard?

Yes. Connect any MCP client (Claude, ChatGPT, Cursor) to Thunders MCP and query your testing data in natural language. Ask about untested features, failure trends, pass rates, or coverage gaps. The answers come from live Thunders data.